5 Foolproof Data Loading Tricks Salesforce Admins Must Know


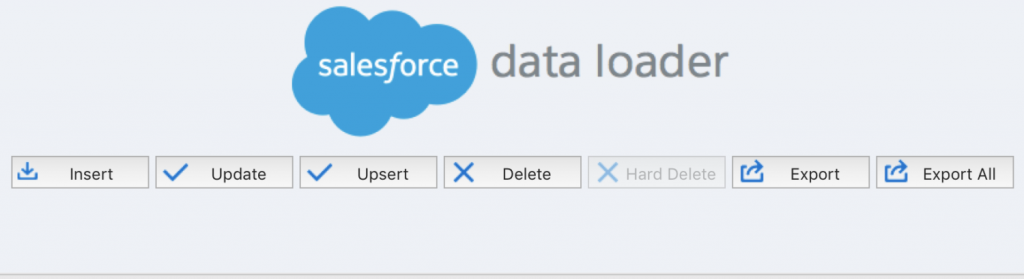

Salesforce data loading can be a daunting task for new Salesforce admins. Whether you’re inserting, updating, upserting, or deleting, your actions touch a lot of records in your org, which can be intimidating. However, if you follow the right best practices, you can complete data loads successfully and with less stress. We’ve outlined 5 foolproof data loading tricks below so you can start completing data loads with more confidence today!

Turn Off Configurations That May Fire On Your Data Load

Depending on the type of data load you are running, a lot of existing records can be touched. Because of this, you will want to temporarily deactivate, ahead of time, any configuration that could accidentally fire on the creation or update of a record. This includes configurations like Workflow Rules and Process Builders that are set to fire when any change is made to a record, or when a record is created for the first time. Temporarily deactivating this configuration will allow you to complete your data load without triggering any accidental changes in your org. Don’t forget to reactivate everything when you complete the data load!

Look for rules whose criteria are set to fire when a record is created, or whenever a record is edited.

Plan Salesforce Data Loading For Off Business Hours

Another useful Salesforce data loading trick is to always run your data loads outside normal business hours. This is especially important if you have deactivated some configuration that fires on the creation of a new record. For example, if you have a Workflow Email Alert that sends a specific email when a new Lead enters your system, you may have deactivated that configuration for your data load. You will want to conduct the data load at a time when new Leads are less likely to enter your system, so that you minimize the chances of a new Lead getting created and not receiving that email.

Practice Salesforce Data Loading in a Full Sandbox First

Practice makes perfect, and with Salesforce data loading, it is vital. If you can, practice your data load in a Full Sandbox environment so you can see what will actually happen to the records and org after completion. Practicing in a Sandbox helps you see whether you accidentally fire configuration during the data load, and also what errors, like a Validation Rule, may prevent the records from uploading or updating properly. Not only will practicing in a Full Sandbox help decrease stress during the actual data load, but it will also save time down the road. By addressing errors prior to a data load, you will understand what changes or deactivations need to be made to your org to ensure a smooth and quick data load.

Here are some tips on how to utilize your Salesforce Sandbox experience

Use an 18 Digit Id Instead of 15

In Salesforce, records have unique identifiers that are 15 characters long. These 15 digit ids are case sensitive, meaning the upper and lowercase characters matter. For example, these are two different records:

5003000000D8tyr

5003000000D8tyR

This works in Salesforce, but data manipulation or cleansing is a common first step in a data load, and many programs used for it cannot take case sensitivity into account. For example, without helper functions that may slow down your workbook, Microsoft Excel ignores case when matching values and would consider the two records above the same. Luckily, records also have an 18 digit id, which is the 15 digit id plus a 3 character suffix that keeps the id unique even when case is ignored. This solves the case sensitivity problem in Excel. You should use this Id when you are doing any form of data manipulation in Excel prior to a data load. Use a converter tool to get a record’s 18 digit id.
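If you would rather see what a converter tool does under the hood, here is a minimal Python sketch of the commonly documented conversion: the 15 digit id is split into three chunks of five characters, and each chunk contributes one suffix character that records which positions are uppercase. The function name `to_18_char_id` and the constant `SUFFIX_ALPHABET` are our own illustrative names, not part of any Salesforce tool, so treat this as a sketch rather than an official implementation.

```python
# Lookup table for the 3-character suffix: 32 symbols, one per 5-bit value.
SUFFIX_ALPHABET = "ABCDEFGHIJKLMNOPQRSTUVWXYZ012345"


def to_18_char_id(record_id: str) -> str:
    """Return the 18 digit form of a 15 digit Salesforce id (illustrative helper)."""
    if len(record_id) == 18:
        return record_id  # already the long form, nothing to do
    if len(record_id) != 15:
        raise ValueError("expected a 15 or 18 character id")

    suffix = ""
    for start in range(0, 15, 5):
        chunk = record_id[start:start + 5]
        # Bit i is set when the i-th character of the chunk is an uppercase letter.
        bits = sum(1 << i for i, ch in enumerate(chunk) if "A" <= ch <= "Z")
        suffix += SUFFIX_ALPHABET[bits]
    return record_id + suffix


# The two ids from the example above differ only in the case of the last letter.
first = to_18_char_id("5003000000D8tyr")
second = to_18_char_id("5003000000D8tyR")
print(first)   # 5003000000D8tyrAAB
print(second)  # 5003000000D8tyRAAR

# Ignoring case (as Excel does), the 15 digit ids collide but the 18 digit ids do not.
print("5003000000D8tyr".lower() == "5003000000D8tyR".lower())  # True
print(first.lower() == second.lower())                         # False
```

The suffix is what lets a case-insensitive tool tell the two records apart, which is why the 18 digit id is the safer key for lookups and de-duplication in a spreadsheet.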

Smaller Batch Sizes

When using the Salesforce Data Loader for your data load, records are processed in incremental batches. This means that Salesforce works through the load in groups of the size you specify, like 10 or 100 records at a time. The maximum batch size you can run is 200 records (unless the Bulk API is enabled, which allows larger batches), but it is not always wise to run batches that large.

Sometimes, depending on the setup of your org, Salesforce can’t process a large number of changes to records at one time, which results in an error stating “Error: System.Exception: Too many SOQL queries”. This usually happens when automation in your org runs queries for every record in the batch, so a bigger batch means more queries at once. To combat this, decrease your batch size in the Data Loader under “Settings”. Although your data load will take longer to run, you will hit fewer errors and have a smoother data load overall.
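To make the trade-off concrete, here is a small Python sketch of what batching does to a load. It is only an illustration of the idea, not how the Data Loader is implemented, and the helper name `split_into_batches` and the 450 record example are our own assumptions.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def split_into_batches(records: Sequence[T], batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive groups of at most batch_size records (illustrative helper)."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(records), batch_size):
        yield list(records[start:start + batch_size])


# Hypothetical load of 450 rows, represented here by simple row numbers.
rows = list(range(450))

large = list(split_into_batches(rows, 200))
small = list(split_into_batches(rows, 50))

print([len(batch) for batch in large])  # [200, 200, 50]
print(len(small))                       # 9
```

With a batch size of 200 the load finishes in 3 batches, while a batch size of 50 needs 9. Each smaller batch asks your org to handle fewer records at once, which is the reason the load takes longer but is less likely to hit limits.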